
One way to solve hard combinatorial optimization problems (e.g. optimally scheduling students and teachers over a weekly plan across several different classes and classrooms) is to computationally mimic how ants forage in the vicinity of their habitats when searching for food. Out of a myriad of possible routes, the ants collectively find the optimal one (minimizing the travel distance) by using stigmergic signal traces, or pheromones, which also change dynamically through evaporation.

Current algorithms, however, only make use of a positive-feedback type of pheromone during their search: if the ants collectively visit a good low-distance route (a minimal pseudo-solution to the problem), they tend to reinforce that signal for their colleagues. There is nothing wrong with that, quite the contrary, but no one knows whether a lower-distance alternative route is also there, just around the corner. In the global search endeavour, like a snowballing effect, positive feedback tends to give credit to the exploitation of solutions but not to the (also useful) exploration side. The upcoming potential solutions can thus crystallize and freeze, while a small change in some parts of the whole route could, on the other hand, successfully improve the global result.

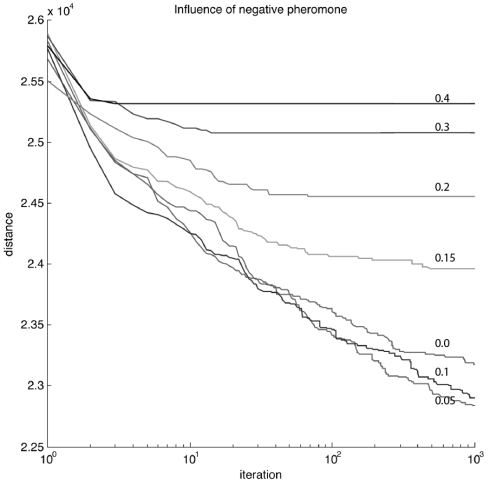

Figure – Influence of negative pheromone on the kroA100.tsp problem (fig. 1, page 6); values on the lines represent 1-ALPHA. A typical standard ACS (Ant Colony System) is represented here by the line with value 0.0, while better results were found by our approach when using positive feedback (0.95) along with negative feedback (0.05). Not only do we obtain better results, we also find them earlier.

There is, however, an advantage when a second type of pheromone (a negative-feedback one) co-evolves with the first type, so we decided to investigate its impact. What we found is that, by using a second type of global feedback, we can indeed search faster while achieving better results. In a way, it’s like using two different types of evaporative traffic lights, green and red, co-evolving together. As a conclusion, we should indeed use a negative no-entry signal pheromone: in small amounts (0.05), but use it. Not only does this prevent the whole system from freezing on some solutions too soon, it also achieves a better compromise over the search space of potential routes. The pre-print article is available at arXiv. The abstract and keywords follow:

Vitorino Ramos, David M. S. Rodrigues, Jorge Louçã, “Second Order Swarm Intelligence” [PDF], in Hybrid Artificial Intelligent Systems, Lecture Notes in Computer Science, Springer-Verlag, Volume 8073, pp. 411-420, 2013.

Abstract: An artificial Ant Colony System (ACS) algorithm to solve general purpose combinatorial Optimization Problems (COP) that extends previous AC models [21] by the inclusion of a negative pheromone, is here described. Several Travelling Salesman Problem‘s (TSP) were used as benchmark. We show that by using two different sets of pheromones, a second-order co-evolved compromise between positive and negative feedbacks achieves better results than single positive feedback systems. The algorithm was tested against known NP complete combinatorial Optimization Problems, running on symmetrical TSPs. We show that the new algorithm compares favourably against these benchmarks, accordingly to recent biological findings by Robinson [26,27], and Grüter [28] where “No entry” signals and negative feedback allows a colony to quickly reallocate the majority of its foragers to superior food patches. This is the first time an extended ACS algorithm is implemented with these successful characteristics.

Keywords: Self-Organization, Stigmergy, Co-Evolution, Swarm Intelligence, Dynamic Optimization, Foraging, Cooperative Learning, Combinatorial Optimization problems, Symmetrical Travelling Salesman Problems (TSP).
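
As a rough illustration of the idea in this post, the sketch below shows one way a positive and a negative pheromone could be blended when an ant picks its next city. It is not the algorithm from the paper: the function names (`edge_score`, `choose_next`, `evaporate`), the linear blend with weights ALPHA = 0.95 and 1-ALPHA = 0.05, the exponent `beta` and the evaporation rate `rho` are all assumptions made for illustration only.

```python
import random


def edge_score(tau_pos, tau_neg, dist, i, j, alpha=0.95, beta=2.0):
    """Desirability of moving from city i to city j (hypothetical blend)."""
    # Positive pheromone attracts; negative ("no entry") pheromone repels.
    blended = alpha * tau_pos[i][j] - (1.0 - alpha) * tau_neg[i][j]
    blended = max(blended, 1e-12)  # keep the score strictly positive
    # Shorter edges are preferred, as in standard ant colony heuristics.
    return blended * (1.0 / dist[i][j]) ** beta


def choose_next(tau_pos, tau_neg, dist, current, unvisited, rng, alpha=0.95):
    """Roulette-wheel choice among the cities not yet visited."""
    cities = sorted(unvisited)
    weights = [edge_score(tau_pos, tau_neg, dist, current, j, alpha)
               for j in cities]
    return rng.choices(cities, weights=weights, k=1)[0]


def evaporate(tau, rho=0.1):
    """Assumed here: both pheromone fields decay at the same kind of rate."""
    for row in tau:
        for j in range(len(row)):
            row[j] *= (1.0 - rho)
```

With `alpha=1.0` the negative field is ignored, which corresponds to the 1-ALPHA = 0.0 line (standard ACS) in the figure above; with `alpha=0.95` an edge carrying a lot of negative pheromone becomes less likely to be chosen.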

Figure – Hybrid Artificial Intelligent Systems new LNAI (Lecture Notes on Artificial Intelligence) series volume 8073, Springer-Verlag Book [original photo by my colleague David M.S. Rodrigues].

New work, new book. Last week one of our latest works was published by Springer. Edited by Jeng-Shyang Pan, Marios M. Polycarpou, Emilio Corchado et al., “Hybrid Artificial Intelligent Systems” comprises a full set of new papers in this hybrid area of Intelligent Computing (check the full list of articles at Springer). Our new paper “Second Order Swarm Intelligence” (pp. 411-420, Springer books link) was published in the Bio-inspired Models and Evolutionary Computation section.

Video lecture – In this new RSA Animate, Manuel Lima, senior UX design lead at Microsoft Bing, explores the power of network visualisation to help navigate our complex modern world. Taken from a lecture given by Manuel Lima as part of the RSA’s free public events programme.

Network visualization has experienced a meteoric rise in the last decade, bringing together people from various fields and capturing the interest of individuals across the globe. As the practice continues to shed light on an incredible array of complex issues, it keeps drawing attention back onto itself. Manuel Lima is a Senior UX Design Lead at Microsoft Bing and founder of VisualComplexity.com, and was nominated as ‘one of the 50 most creative and influential minds of 2009’ by Creativity Magazine. He visits the RSA to explore a critical paradigm shift in various areas of knowledge, as we stop relying on hierarchical tree structures and turn instead to networks in order to properly map the inherent complexities of our modern world. The talk will showcase a variety of captivating examples of visualization and also introduce the network topology as a new cultural meme. (from RSA, lecture link).

Ever tried to solve a problem whose own statement is constantly changing? Have a look at our approach:

Vitorino Ramos, David M.S. Rodrigues, Jorge Louçã, “Spatio-Temporal Dynamics on Co-Evolved Stigmergy“, in European Conference on Complex Systems, ECCS’11, Vienna, Austria, Sept. 12-16 2011.

Abstract: Research over hard NP-complete Combinatorial Optimization Problems (COP’s) has been focused in recent years, on several robust bio-inspired meta-heuristics, like those involving Evolutionary Computation (EC) algorithmic paradigms. One particularly successful well-know meta-heuristic approach is based on Swarm Intelligence (SI), i.e., the self-organized stigmergic-based property of a complex system whereby the collective behaviors of (unsophisticated) entities interacting locally with their environment cause coherent functional global patterns to emerge. This line of research recognized as Ant Colony Optimization (ACO), uses a set of stochastic cooperating ant-like agents to find good solutions, using self-organized stigmergy as an indirect form of communication mediated by artificial pheromone, whereas agents deposit pheromone-signs on the edges of the problem-related graph complex network, encompassing a family of successful algorithmic variations such as: Ant Systems (AS), Ant Colony Systems (ACS), Max-Min Ant Systems (Max–Min AS) and Ant-Q.

Albeit being extremely successful these algorithms mostly rely on positive feedback’s, causing excessive algorithmic exploitation over the entire combinatorial search space. This is particularly evident over well known benchmarks as the symmetrical Traveling Salesman Problem (TSP). Being these systems comprised of a large number of frequently similar components or events, the principal challenge is to understand how the components interact to produce a complex pattern feasible solution (in our case study, an optimal robust solution for hard NP-complete dynamic TSP-like combinatorial problems). A suitable approach is to first understand the role of two basic modes of interaction among the components of Self-Organizing (SO) Swarm-Intelligent-like systems: positive and negative feedback. While positive feedback promotes a snowballing auto-catalytic effect (e.g. trail pheromone upgrading over the network; exploitation of the search space), taking an initial change in a system and reinforcing that change in the same direction as the initial deviation (self-enhancement and amplification) allowing the entire colony to exploit some past and present solutions (environmental dynamic memory), negative feedback such as pheromone evaporation ensure that the overall learning system does not stables or freezes itself on a particular configuration (innovation; search space exploration). Although this kind of (global) delayed negative feedback is important (evaporation), for the many reasons given above, there is however strong assumptions that other negative feedbacks are present in nature, which could also play a role over increased convergence, namely implicit-like negative feedbacks. As in the case for positive feedbacks, there is no reason not to explore increasingly distributed and adaptive algorithmic variations where negative feedback is also imposed implicitly (not only explicitly) over each network edge, while the entire colony seeks for better answers in due time.

In order to overcome this hard search space exploitation-exploration compromise, our present algorithmic approach follows the route of very recent biological findings showing that forager ants lay attractive trail pheromones to guide nest mates to food, but where, the effectiveness of foraging networks were improved if pheromones could also be used to repel foragers from unrewarding routes. Increasing empirical evidences for such a negative trail pheromone exists, deployed by Pharaoh’s ants (Monomorium pharaonis) as a ‘no entry‘ signal to mark unrewarding foraging paths. The new algorithm comprises a second order approach to Swarm Intelligence, as pheromone-based no entry-signals cues, were introduced, co-evolving with the standard pheromone distributions (collective cognitive maps) in the aforementioned known algorithms.

To exhaustively test his adaptive response and robustness, we have recurred to different dynamic optimization problems. Medium-size and large-sized dynamic TSP problems were created. Settings and parameters such as, environmental upgrade frequencies, landscape changing or network topological speed severity, and type of dynamic were tested. Results prove that the present co-evolved two-type pheromone swarm intelligence algorithm is able to quickly track increasing swift changes on the dynamic TSP complex network, compared to standard algorithms.

Keywords: Self-Organization, Stigmergy, Co-Evolution, Swarm Intelligence, Dynamic Optimization, Foraging, Cooperative Learning, Combinatorial Optimization problems, Dynamical Symmetrical Traveling Salesman Problems (TSP).

Fig. – Recovery times over several dynamical stress tests at the fl1577 TSP problem (1577 node graph) – 460 iter max – Swift changes at every 150 iterations (20% = 314 nodes, 40% = 630 nodes, 60% = 946 nodes, 80% = 1260 nodes, 100% = 1576 nodes).

David M.S. Rodrigues, Jorge Louçã, Vitorino Ramos, “From Standard to Second Order Swarm Intelligence Phase-space maps“, in European Conference on Complex Systems, ECCS’11, Vienna, Austria, Sept. 12-16 2011.

Abstract: Standard Stigmergic approaches to Swarm Intelligence encompasses the use of a set of stochastic cooperating ant-like agents to find optimal solutions, using self-organized Stigmergy as an indirect form of communication mediated by a singular artificial pheromone. Agents deposit pheromone-signs on the edges of the problem-related graph to give rise to a family of successful algorithmic approaches entitled Ant Systems (AS), Ant Colony Systems (ACS), among others. These mainly rely on positive feedback’s, to search for an optimal solution in a large combinatorial space. The present work shows how, using two different sets of pheromones, a second-order co-evolved compromise between positive and negative feedback’s achieves better results than single positive feedback systems. This follows the route of very recent biological findings showing that forager ants, while laying attractive trail pheromones to guide nest mates to food, also gained foraging effectiveness by the use of pheromones that repelled foragers from unrewarding routes. The algorithm presented here takes inspiration precisely from this biological observation.

The new algorithm was exhaustively tested on a series of well-known benchmarks over hard NP-complete Combinatorial Optimization Problems (COP’s), running on symmetrical Traveling Salesman Problems (TSP). Different network topologies and stress tests were conducted over low-size TSP’s (eil51.tsp; eil78.tsp; kroA100.tsp), medium-size (d198.tsp; lin318.tsp; pcb442.tsp; att532.tsp; rat783.tsp) as well as large sized ones (fl1577.tsp; d2103.tsp) [numbers here referring to the number of nodes in the network]. We show that the new co-evolved stigmergic algorithm compared favorably against the benchmark. The algorithm was able to equal or majorly improve every instance of those standard algorithms, not only in the realm of the Swarm Intelligent AS, ACS approach, as in other computational paradigms like Genetic Algorithms (GA), Evolutionary Programming (EP), as well as SOM (Self-Organizing Maps) and SA (Simulated Annealing). In order to deeply understand how a second co-evolved pheromone was useful to track the collective system into such results, a refined phase-space map was produced mapping the pheromones ratio between a pure Ant Colony System (where no negative feedback besides pheromone evaporation is present) and the present second-order approach. The evaporation rate between different pheromones was also studied and its influence in the outcomes of the algorithm is shown. A final discussion on the phase-map is included. This work has implications in the way large combinatorial problems are addressed as the double feedback mechanism shows improvements over the single-positive feedback mechanisms in terms of convergence speed and on major results.

Keywords: Stigmergy, Co-Evolution, Self-Organization, Swarm Intelligence, Foraging, Cooperative Learning, Combinatorial Optimization problems, Symmetrical Traveling Salesman Problems (TSP), phase-space.

Fig. – Comparing convergence results between Standard algorithms vs. Second Order Swarm Intelligence, over TSP fl1577.

The importance of Network Topology again… at [PLoS]. Abstract: […] The performance of information processing systems, from artificial neural networks to natural neuronal ensembles, depends heavily on the underlying system architecture. In this study, we compare the performance of parallel and layered network architectures during sequential tasks that require both acquisition and retention of information, thereby identifying tradeoffs between learning and memory processes. During the task of supervised, sequential function approximation, networks produce and adapt representations of external information. Performance is evaluated by statistically analyzing the error in these representations while varying the initial network state, the structure of the external information, and the time given to learn the information. We link performance to complexity in network architecture by characterizing local error landscape curvature. We find that variations in error landscape structure give rise to tradeoffs in performance; these include the ability of the network to maximize accuracy versus minimize inaccuracy and produce specific versus generalizable representations of information. Parallel networks generate smooth error landscapes with deep, narrow minima, enabling them to find highly specific representations given sufficient time. While accurate, however, these representations are difficult to generalize. In contrast, layered networks generate rough error landscapes with a variety of local minima, allowing them to quickly find coarse representations. Although less accurate, these representations are easily adaptable. The presence of measurable performance tradeoffs in both layered and parallel networks has implications for understanding the behavior of a wide variety of natural and artificial learning systems. […] (*)

(*) in Hermundstad AM, Brown KS, Bassett DS, Carlson JM, 2011 Learning, Memory, and the Role of Neural Network Architecture. PLoS Comput Biol 7(6): e1002063. doi:10.1371/journal.pcbi.1002063

Drawing (page 5) – Riccardo Manzotti – A Process View of Reality – April 2008.

“To destroy variety at a scale, we need variety at another scale”, Yaneer Bar-Yam, ICCS’11 – Int. Conference on Complex Systems, Boston, June 2011.

In “a process oriented externalist solution to the hard problem” (A Process View of Reality, 2008), an 8-page comic series (pdf link) about the Mind-Body problem, or David Chalmers’ Hard Problem, Riccardo Manzotti asks: […] How can the conscious mind emerge out of physical stuff like the brain? Apparently, Science faces an unsolvable problem: The hard problem states that there is an unbridgeable gap between our conscious experience and the scientific description of the world. The modern version of the mind-body problem arose when the scholars of the XVII century suggested that reality is divided in the mental domain and in the physical domain […]. In the next 7 pages, Manzotti comes up with a possible solution, not far from what Science has been doing since the 1950s: avoiding reductionism.

Video – “Be the one” track on Moby’s Destroyed album. Destroyed is the tenth studio album by the American electronic artist Moby. It was released in 2011 by Mute Records. Network video animation by Taras Gesh.

From time to time, colleagues, students and friends ask me for a good introductory paper on Complex Networks. There are several, including some general books, but over the years I have tended to point to “The Shortest Path to Complex Networks” by J.F.F. Mendes (whom I have met several times over the years) and S.N. Dorogovtsev, released in 2004. For beginners in the field who want to play with, or indeed program, several concepts in the area, such as clustering, small-worlds, edge condensation, cliques and communities, as well as preferential attachment (point 20), this is a rather simple, quick (it is only 25 pages) and straightforward paper, well organized around 51 different entries, topics and concepts. Not every topic is covered, of course; the node age issue, for instance, which for several reasons tends to interest me, is missing. Point 1 starts with the birth of network science, and it is as simple as this: […] In 1735, in St Petersburg, Leonhard Euler solved the so-called Konigsberg bridge problem – walks on a simple small graph. This solution (actually, a proof) is usually considered as a starting point of the science of networks […]. The full paper is available through arXiv.org. Here is the link. Do enjoy. Like Moby says… be the one. The full reference list is also a must.

Figure – Understanding the Brain as a Computational Network: significant neuronal motifs of size 3. Most over-represented colored motifs of size 3 in the C. elegans complex neuronal network. Green: sensory neuron; blue: motor neuron; red: interneuron. Arrows represent direction that the signal travels between the two cells. (from Adami et al. 2011 [Ref. below])

Abstract: […] Complex networks can often be decomposed into less complex sub-networks whose structures can give hints about the functional organization of the network as a whole. However, these structural motifs can only tell one part of the functional story because in this analysis each node and edge is treated on an equal footing. In real networks, two motifs that are topologically identical but whose nodes perform very different functions will play very different roles in the network. Here, we combine structural information derived from the topology of the neuronal network of the nematode C. elegans with information about the biological function of these nodes, thus coloring nodes by function. We discover that particular colorations of motifs are significantly more abundant in the worm brain than expected by chance, and have particular computational functions that emphasize the feed-forward structure of information processing in the network, while evading feedback loops. Interneurons are strongly over-represented among the common motifs, supporting the notion that these motifs process and transduce the information from the sensor neurons towards the muscles. Some of the most common motifs identified in the search for significant colored motifs play a crucial role in the system of neurons controlling the worm’s locomotion. The analysis of complex networks in terms of colored motifs combines two independent data sets to generate insight about these networks that cannot be obtained with either data set alone. The method is general and should allow a decomposition of any complex networks into its functional (rather than topological) motifs as long as both wiring and functional information is available. […] from Qian J, Hintze A, Adami C (2011) Colored Motifs Reveal Computational Building Blocks in the C. elegans Brain, PLoS ONE 6(3): e17013. doi:10.1371/journal.pone.0017013
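
To make the notion of a “colored motif” a little more concrete, here is a minimal sketch that counts one simple three-node pattern, the directed chain a → b → c, grouped by the functional type of each node. It is only an illustration, not the method of the paper: the function name `colored_chain_counts`, the toy wiring and the type labels are hypothetical, and the sketch does not test whether a pattern is more abundant than expected by chance, which is the core of the analysis described in the abstract.

```python
from collections import Counter


def colored_chain_counts(edges, color):
    """Count directed chains a -> b -> c, keyed by (color[a], color[b], color[c])."""
    # Successor sets: succ[u] holds every node that u sends a signal to.
    succ = {}
    for u, v in edges:
        succ.setdefault(u, set()).add(v)

    counts = Counter()
    for a, mids in succ.items():
        for b in mids:
            for c in succ.get(b, ()):
                # Require three distinct nodes (no self-loops, no a -> b -> a).
                if a != b and c != a and c != b:
                    counts[(color[a], color[b], color[c])] += 1
    return counts


if __name__ == "__main__":
    # Toy wiring (hypothetical): two sensory neurons feed one interneuron,
    # which drives one motor neuron.
    toy_edges = [("s1", "i1"), ("s2", "i1"), ("i1", "m1")]
    toy_color = {"s1": "sensory", "s2": "sensory",
                 "i1": "interneuron", "m1": "motor"}
    for pattern, n in colored_chain_counts(toy_edges, toy_color).items():
        print(pattern, n)
```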

[…] The role of evolution in producing these patterns is clear, said Adami. “Selection favors those motifs that impart high fitness to the organism, and suppresses those that work against the task at hand.” In this way, the efficient and highly functional motifs (such as the sensory neuron-interneuron-motor neuron motif) are very common in the nervous system, while those that would waste energy and give no benefit to, or even harm, the animal are not found in the network. “Adami and his team have used evolutionary computation to develop hypotheses about the evolution of neural circuits and find, for these nematode worms, that simplicity is the rule,” says George Gilchrist, program director in NSF’s Division of Environmental Biology (in the Directorate for Biological Sciences), which funds BEACON. “By including functional information about each node in the circuit, they have begun decoding the role of natural selection in shaping the architecture of neural circuits.” […] from Danielle J. Whittaker “Understanding the Brain as a Computational Network“, NSF, April 2011.

Drawing (Pedigree of Man, 1879) – Ernst Haeckel’s “tree of life”, Darwin’s metaphorical description of the pattern of universal common descent made literal by his greatest popularizer in the German scientific world. This is the English version of Ernst Haeckel’s tree from The Evolution of Man (published 1879), one of several depictions of a tree of life by Haeckel. “Man” is at the crown of the tree; for Haeckel, as for many early evolutionists, humans were considered the pinnacle of evolution.

“How we engage the dance between structure and flow becomes our unique creative signature“, ~ Michelle James, VA, USA, 2010.

[…] Abstract: The problem of sending the maximum amount of flow q between two arbitrary nodes s and t of complex networks along links with unit capacity is studied, which is equivalent to determining the number of link-disjoint paths between s and t. The average of q over all node pairs with smaller degree kmin is ⟨q⟩_kmin ≃ c·kmin for large kmin with c a constant implying that the statistics of q is related to the degree distribution of the network. The disjoint paths between hub nodes are found to be distributed among the links belonging to the same edge-biconnected component, and q can be estimated by the number of pairs of edge-biconnected links incident to the start and terminal node. The relative size of the giant edge-biconnected component of a network approximates to the coefficient c. The applicability of our results to real world networks is tested for the Internet at the autonomous system level. […], in D.-S. Lee and H. Rieger, “Maximum flow and topological structure of complex networks”, EPL Journal, Europhysics Letters, Vol. 73, Number 3, p.471, 2006. [link] …
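
The equivalence stated in the abstract, maximum flow with unit capacities equals the number of link-disjoint paths, can be tried out on small graphs. The sketch below uses a plain breadth-first augmenting-path search (the Edmonds-Karp scheme); this is a textbook technique chosen here for brevity, not necessarily what the authors used, and the function name `link_disjoint_paths` is hypothetical.

```python
from collections import deque


def link_disjoint_paths(edges, s, t):
    """Maximum flow from s to t when every undirected link has capacity 1."""
    if s == t:
        raise ValueError("source and target must differ")
    cap = {}
    adj = {}
    for u, v in edges:
        # An undirected unit link is modelled as two opposite unit arcs.
        cap[(u, v)] = cap.get((u, v), 0) + 1
        cap[(v, u)] = cap.get((v, u), 0) + 1
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)

    flow = 0
    while True:
        # Breadth-first search for a path with spare capacity.
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v in adj.get(u, ()):
                if v not in parent and cap[(u, v)] > 0:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            return flow  # no augmenting path left
        # Push one unit of flow back along the path that was found.
        v = t
        while parent[v] is not None:
            u = parent[v]
            cap[(u, v)] -= 1
            cap[(v, u)] += 1
            v = u
        flow += 1
```

On a ring of four nodes, `link_disjoint_paths([(0, 1), (1, 2), (2, 3), (3, 0)], 0, 2)` gives 2: one path on each side of the ring. Since every path must leave s and enter t through a different link, q can never exceed the smaller of the two degrees, which is why the abstract compares q with kmin.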

“Artificial Life 101” lesson nº1: emerge yourself an experimental “fire” and observe it carefully till the end.

… The other day I decided to work for almost 48 hours in a row and at the end… to sleep a few hours. When I woke up… – the day (e.g. “flow“) was almost gone, and night (e.g. “structure“) approaching fast -. So I decided, to grab the last sun rays… ; growing up, I guess…

Caption – 1st page of Erdös, P.; Rényi, A. (1960). “On The Evolution of Random Graphs“. Magyar Tud. Akad. Mat. Kutató Int. Közl. 5: 17 [PDF].

Documentary film – “N is a Number: A Portrait of Paul Erdös” directed by George Csicsery | 1993 | 57 min. (9 parts)

“There are three signs of senility. The first sign is that a man forgets his theorems. The second sign is that he forgets to zip up. The third sign is that he forgets to zip down“, Paul Erdös.

Paul Erdös was an immensely prolific and notably eccentric Hungarian mathematician. Erdös published more papers than any other mathematician in history, working with hundreds of collaborators. He worked on problems in combinatorics, graph theory, number theory, classical analysis, approximation theory, set theory, and probability theory. He wrote around 1,475 mathematical articles in his lifetime, mostly with co-authors. He strongly believed in and practised mathematics as a social activity, having 511 different collaborators in his lifetime (source).

Erdös had no home: he trotted the globe from place to place, living mostly for short periods in friends’ and colleagues’ houses, almost as if he were travelling from one set of mathematics to another. It was not only the quantity of his work that was tremendous, but above all its quality and impact. It was not rare for a single paper to produce an entire brand new field of research. One of my favourite examples is his paper with Rényi on random graphs (image caption above): Erdös, P.; Rényi, A. (1960). “On The Evolution of Random Graphs”. Magyar Tud. Akad. Mat. Kutató Int. Közl. 5: 17 [PDF]. Alone, this single paper gave rise to a new branch of science, critical in our times: Complex Networks. The work has now been cited more than 2500 times.

Fig. – Erdös and Rényi (1959, 1960, 1961) were the first to introduce the concept of random graphs, in 1959. The simple model of a network involves taking some number of vertices, N, and connecting nodes by selecting edges at random from the N(N-1)/2 possible edges (Albert and Barabási 2002; Newman 2003). The figure shows three random graphs where the probability of an edge being selected is p=0, p=0.1 and p=0.2. (a) Initially, 20 nodes are isolated. (b, c) Pairs of nodes are connected, each edge being selected with probability p: (b) p=0.1, (c) p=0.2; notice how quickly the nodes become connected. The Erdös and Rényi random graph studies explore how the expected topology of the random graph changes as a function of the number of links. It has been shown that when the connection probability p is below 1/N, the graph is fragmented into small isolated clusters. Above this threshold, a single large cluster, the giant component, emerges. We now know that at the threshold the behaviour is indeterminate (Strogatz 2001). Random graphs also show the emergence of subgraphs (below). Erdös and Rényi explored the emergence of these structures, which form patterns such as trees, cycles and loops. Like the giant component, these subgraphs have distinct thresholds at which they form different patterns (below).

Fig. – Different subgraphs appear at varying threshold probabilities in a random graph (Albert and Barabási 2002)
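
For readers who want to see the giant component appear, here is a minimal sketch of the random graph model from the captions above: each of the N(N-1)/2 possible edges is kept with probability p, and the size of the largest cluster is then measured. The function names, the seed and the chosen values of N and p are assumptions for illustration; the interesting region is a mean degree p·(N-1) around 1, that is, p close to 1/N.

```python
import random


def random_graph(n, p, rng):
    """G(n, p): keep each of the n(n-1)/2 possible edges with probability p."""
    return [(i, j) for i in range(n) for j in range(i + 1, n)
            if rng.random() < p]


def largest_component(n, edges):
    """Size of the largest connected cluster, computed with union-find."""
    parent = list(range(n))

    def find(x):
        # Follow parent links to the root, shortening the path on the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for u, v in edges:
        parent[find(u)] = find(v)

    sizes = {}
    for x in range(n):
        root = find(x)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values())


if __name__ == "__main__":
    rng = random.Random(42)
    n = 1000
    for mean_degree in (0.5, 1.0, 2.0):
        p = mean_degree / n
        size = largest_component(n, random_graph(n, p, rng))
        print(f"p = {p:.4f}  largest cluster = {size} of {n} nodes")
```

Running it with a few different seeds and larger N gives a feel for how sharply the largest cluster grows once p passes 1/N.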

Remove one network edge and see what happens. Then two… etc. This is the first illustration in Mark Buchanan’s 2002 book “Nexus: Small-worlds and the ground-breaking science of networks” (Norton, New York; Prelude, page 17), representing a portion of the food web for the Benguela ecosystem, located off the western coast of South Africa (from Peter Yodzis). For a joint review of 3 general books on complex networks, including Barabási’s “Linked”, Duncan Watts’ “Small-Worlds” and Buchanan’s “Nexus”, pay a visit to JASSS – Journal of Artificial Societies and Social Simulation, ‘a review of three books’ entry by Frédéric Amblard (
